So I have been looking into MSIX AppAttach performance. Previous posts include one comparing publishing performance against normal MSIX and App-V, and another detailing where MSIX AppAttach publishing spends its time.

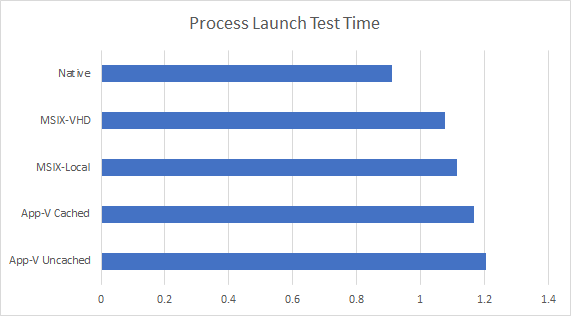

Today we look at a comparison of process launch times. In this test, I use an automated Win32 application that launches to a static GUI, sleeps for 5 seconds, and shuts down. For each scenario I measure this over a thousand launches, subtracting out the 5 second sleep time.
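As a rough illustration of that measurement, here is a minimal Python sketch. It is not the harness I actually used; the function names and the command passed in are hypothetical. It assumes the overhead is simply the wall-clock time from launch to exit minus the fixed sleep.

```python
import statistics
import subprocess
import time


def measure_launch_overhead_ms(command, sleep_seconds=5.0, launches=1000):
    """Time each launch-to-exit cycle and subtract the app's fixed sleep.

    `command` is the argument list that starts the test app (hypothetical here).
    Returns one overhead value in milliseconds per launch.
    """
    overheads_ms = []
    for _ in range(launches):
        start = time.perf_counter()
        subprocess.run(command, check=True)  # blocks until the app exits
        elapsed = time.perf_counter() - start
        overheads_ms.append((elapsed - sleep_seconds) * 1000.0)
    return overheads_ms


def summarize(overheads_ms):
    """Reduce the per-launch samples to a few comparable numbers."""
    return {
        "launches": len(overheads_ms),
        "mean_ms": statistics.mean(overheads_ms),
        "median_ms": statistics.median(overheads_ms),
        "stdev_ms": statistics.stdev(overheads_ms) if len(overheads_ms) > 1 else 0.0,
    }
```

Each scenario would get its own call, with `command` pointing at however that scenario starts the app. Note that a figure measured this way includes process teardown as well as startup.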

The scenarios include:

- A native exe launch on the local system.

- MSIX using AppAttach, so the exe is on a remote VHD.

- MSIX without AppAttach, so the exe is on the local system.

- App-V using Shared Content Store mode, so the exe streams over as needed.

- App-V with the exe fully cached on the local system.

The exe is quite small, so the advantages of local caching might be less than for typical applications.

Considering that we don’t hear complaints about App-V launch time, it looks like MSIX will be fine in any case. In fact, the margin of error on such tests runs in the 250ms range, so all of the results should be considered close.
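To make the "considered close" point concrete, here is a small Python sketch that flags only the scenario pairs whose mean launch times differ by more than the margin of error. The function name and the input dictionary are hypothetical. The 250ms default is the rough figure quoted above, not a computed confidence interval.

```python
from itertools import combinations


def pairs_beyond_margin(means_ms, margin_ms=250.0):
    """Return scenario pairs whose means differ by more than the margin.

    `means_ms` maps a scenario name to its mean launch overhead in ms.
    Pairs not returned should be treated as indistinguishable.
    """
    return [
        (a, b)
        for a, b in combinations(sorted(means_ms), 2)
        if abs(means_ms[a] - means_ms[b]) > margin_ms
    ]
```

An empty result means none of the differences can be separated from measurement noise at that margin.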

That is especially true since the MSIX packages have the disadvantage of requiring PsfLauncher. The time measured for those packages includes PsfLauncher starting up and then launching the target app. This was necessary so that the app can get its command line arguments, but since most traditional apps repackaged into MSIX require the PSF for production quality, that is probably quite fair.

But why is AppAttach faster than MSIX-Local? That is a mystery right now. The testing is performed on-prem to keep out uncontrolled influences that I might get on Azure. In my tests, the MSIX VHD file was stored on the same SSD server share as the “local” VM. (On Azure it wouldn’t be surprising to see the VM use a lower class of storage than the VHD to save money, and that might change things.) All tests involve 1000 launches and I ran each set twice, so I don’t think it was how I tested, and at this point the results are good enough overall that it probably doesn’t matter.

So what is wrong with this test? Well, it is an overly simplistic scenario. It represents a “minimum” type of measurement, as in “what is the least amount of overhead involved” in launching an app.

- It does not attempt to measure a wide variety of packages that might have very different impacts.

- It does not attempt to measure for single-lock-like contention issues that would be likely to show up in a multi-user OS with lots of users running applications in parallel, or otherwise consuming shared resources.

So we are only scratching the surface on MSIX performance at this point so as to get our bearings for future tests, by me or others. It’s a start!